Recognition from Hand Cameras

VSLAB


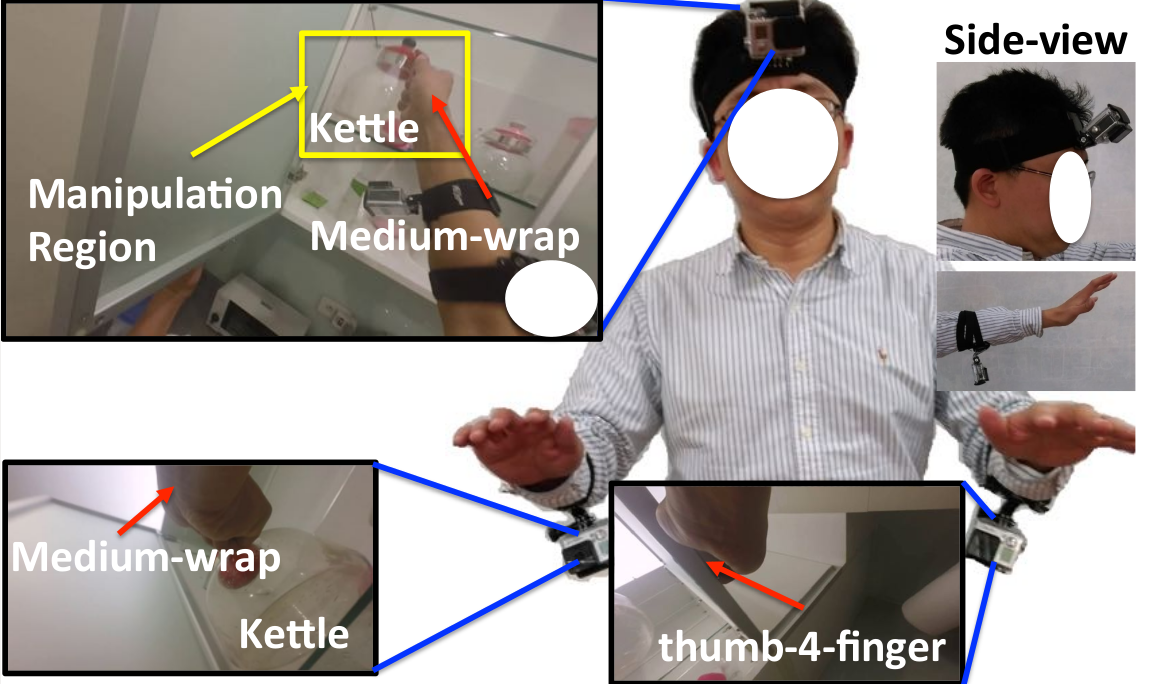

Recent advances in wearable devices have led to significant interest in recognizing human behaviors in daily life (i.e., in uninstrumented environments). Among many devices, egocentric camera systems have drawn particular attention: because the camera is aligned with the wearer's field of view, it naturally captures what the person sees. These systems have shown great potential in recognizing daily activities (e.g., making meals, watching TV), estimating hand poses, generating how-to videos, and more. Despite these advantages, egocentric camera systems have two main issues that are much less discussed. First, hand localization remains unsolved, especially for passive camera systems; even for active camera systems such as Kinect, it is challenging when two hands interact with each other or when a hand interacts with an object. Second, because of the limited field of view of an egocentric camera, hands inevitably move outside the image at times.

We propose HandCam (Fig. 1), a novel wearable camera that captures the activities of hands, for recognizing human behaviors. HandCam has two main advantages over egocentric systems: (1) it avoids the need to detect hands and manipulation regions; (2) it observes the activities of hands almost all the time. Taking advantage of these properties, we propose a deep-learning-based method to recognize hand states (free vs. active hands, hand gestures, object categories). Moreover, we propose a novel two-stream deep network that further takes advantage of both HandCam and HeadCam.
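This page does not describe how the two streams (HandCam and HeadCam) are combined. As a hedged illustration only, the sketch below assumes simple late fusion: each stream produces class scores for the same hand states, the scores are turned into probabilities, and the probabilities are averaged with a weight. The function names `softmax`, `late_fusion` and `predict` and the parameter `hand_weight` are hypothetical; this is a generic technique, not the HandCam network itself.

```python
import math
from typing import List, Sequence


def softmax(logits: Sequence[float]) -> List[float]:
    """Numerically stable softmax: subtract the max score before exponentiating."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def late_fusion(hand_logits: Sequence[float],
                head_logits: Sequence[float],
                hand_weight: float = 0.5) -> List[float]:
    """Hypothetical late fusion: weighted average of per-stream class probabilities."""
    if len(hand_logits) != len(head_logits):
        raise ValueError("both streams must score the same set of classes")
    if not 0.0 <= hand_weight <= 1.0:
        raise ValueError("hand_weight must be in [0, 1]")
    p_hand = softmax(hand_logits)  # probabilities from the hand-camera stream
    p_head = softmax(head_logits)  # probabilities from the head-camera stream
    return [hand_weight * a + (1.0 - hand_weight) * b
            for a, b in zip(p_hand, p_head)]


def predict(hand_logits: Sequence[float],
            head_logits: Sequence[float],
            labels: Sequence[str],
            hand_weight: float = 0.5) -> str:
    """Return the label with the highest fused probability."""
    fused = late_fusion(hand_logits, head_logits, hand_weight)
    best = max(range(len(fused)), key=fused.__getitem__)
    return labels[best]


if __name__ == "__main__":
    labels = ["free", "active"]
    # Made-up scores: the hand stream is confident the hand is active,
    # while the head stream mildly prefers "free".
    print(predict([0.2, 2.0], [1.0, 0.5], labels))
```

With equal weights the confident hand stream dominates here, so the fused prediction is "active"; setting `hand_weight=0.0` falls back to the head stream alone. Averaging probabilities is only one option; a learned fusion layer over concatenated features is a common alternative.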


Dataset (download)


We have collected a new synchronized HandCam and HeadCam dataset for hand-state recognition, consisting of 20 videos captured in three scenes.

Demo


Here is a video demo of our hand-camera system. The synchronized video shows four views.

The top-left view is from the hand camera; we use its images to predict object categories. The other three views record the user's actions from different viewpoints: the bottom-left view is from a camera mounted on the user's arm, the bottom-right view is from a camera mounted on the user's head, and the top-right view is from a fixed camera at another viewpoint.


Publications

Contact: Cheng-Sheng Chan

Last update: August 27th, 2016
