Abstract

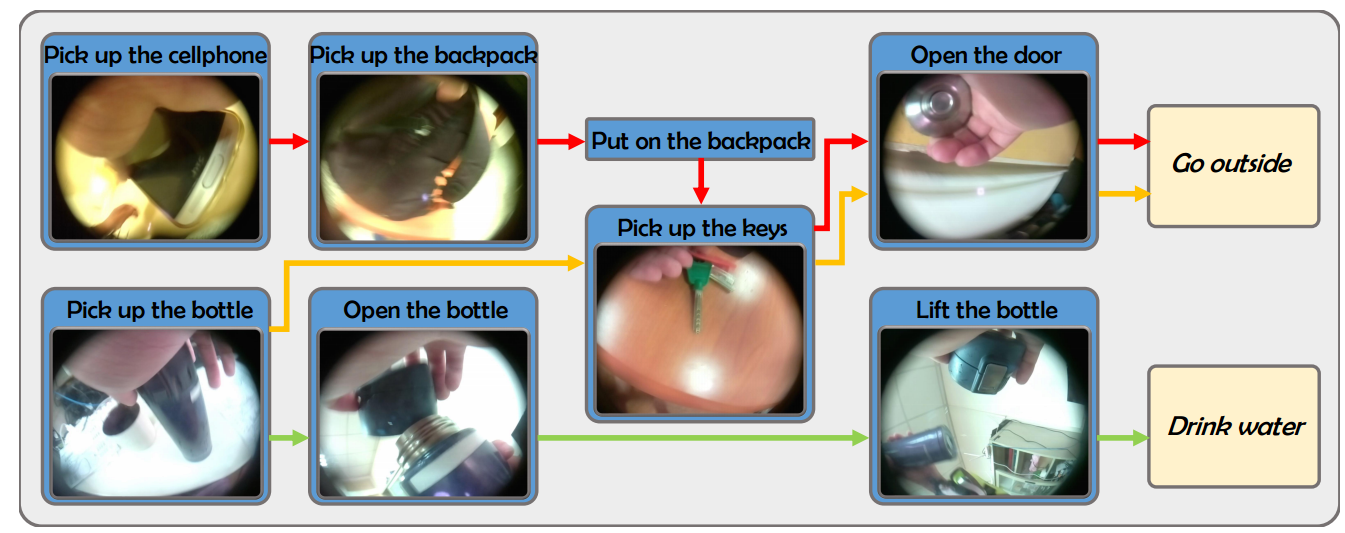

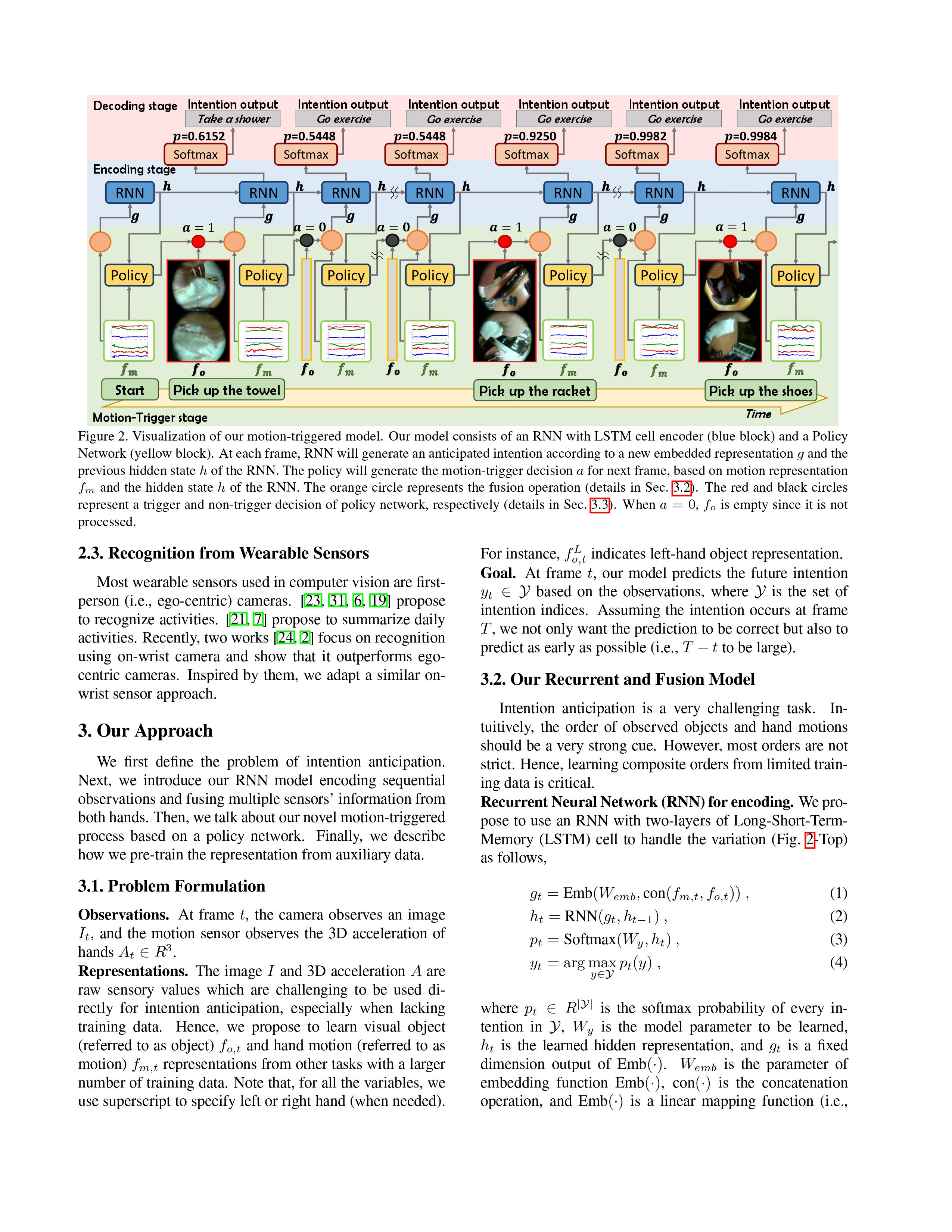

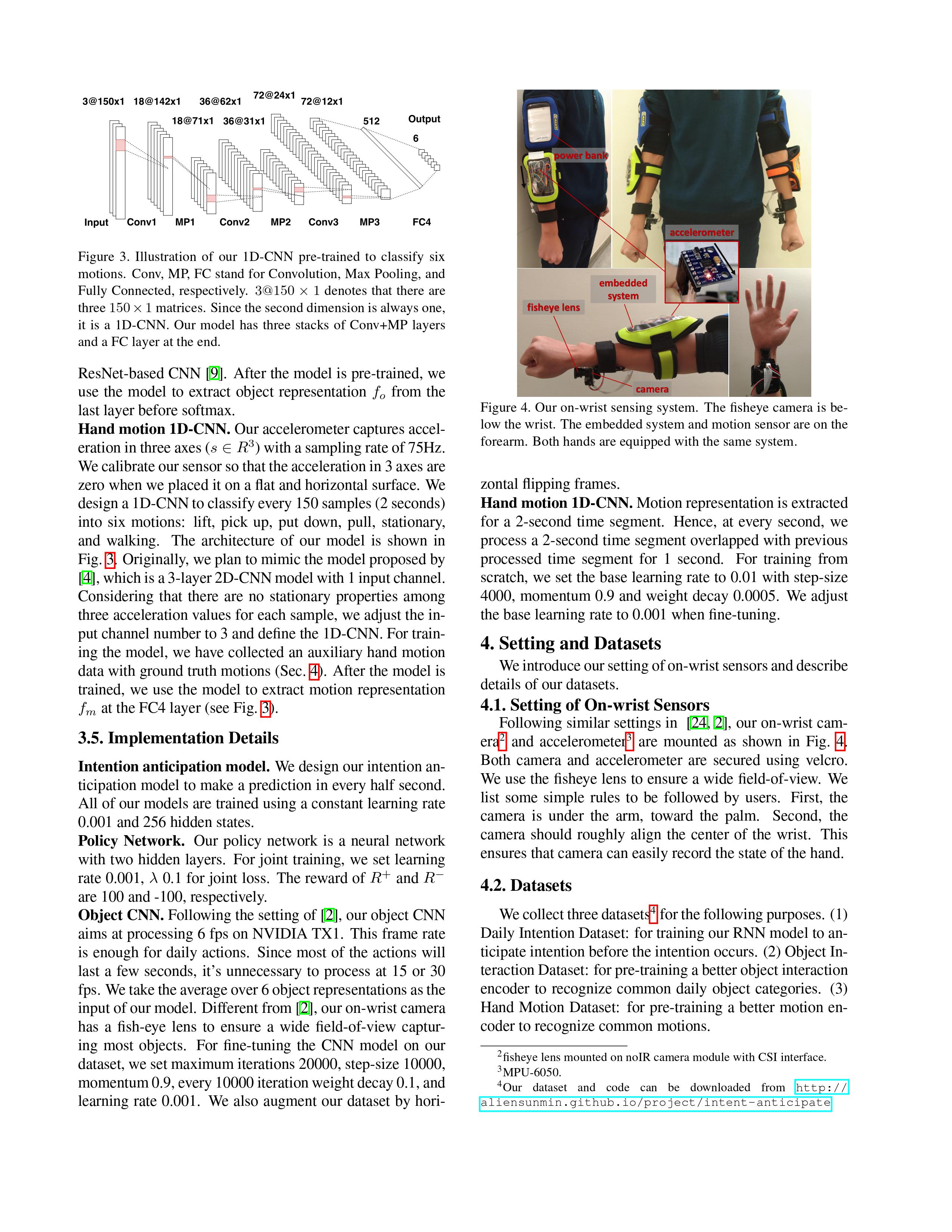

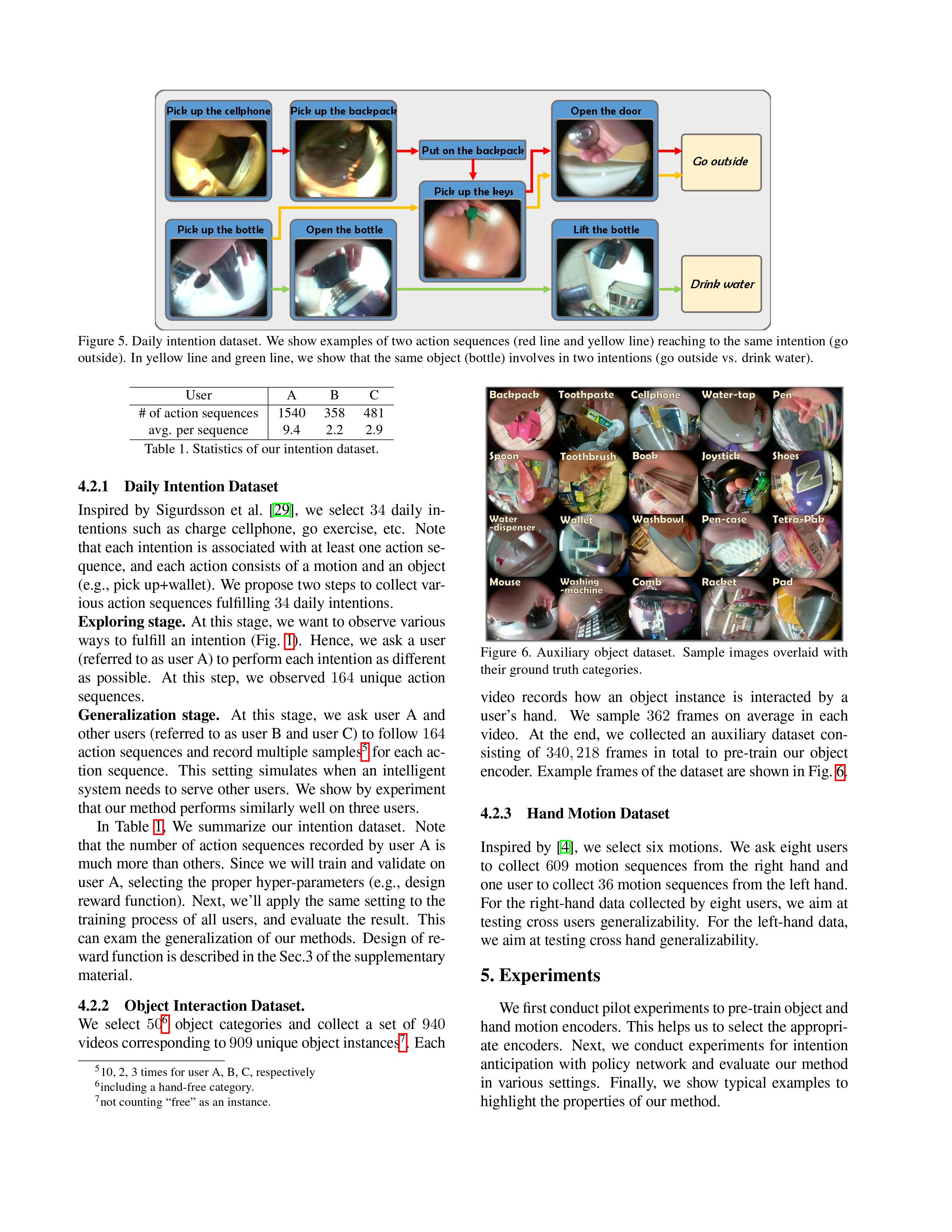

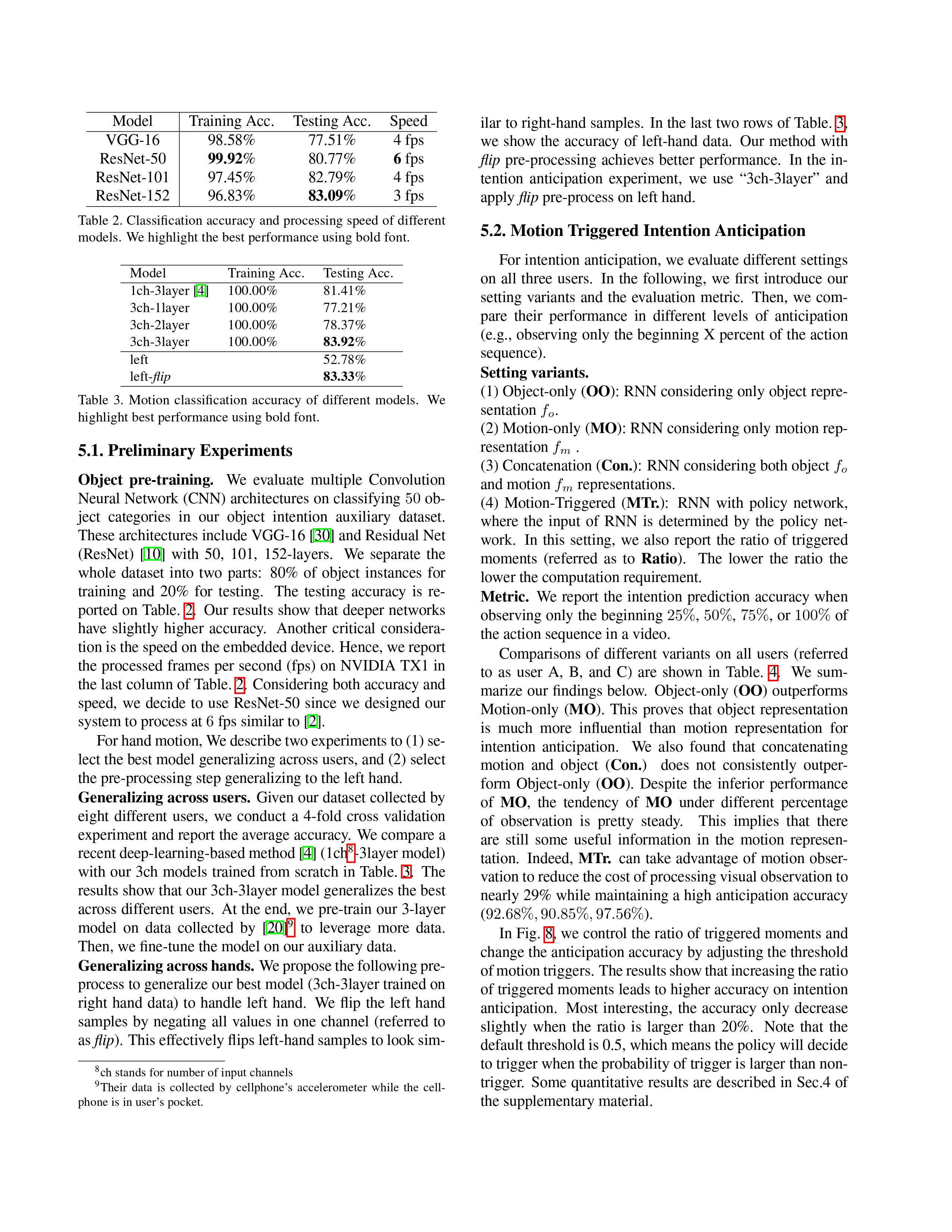

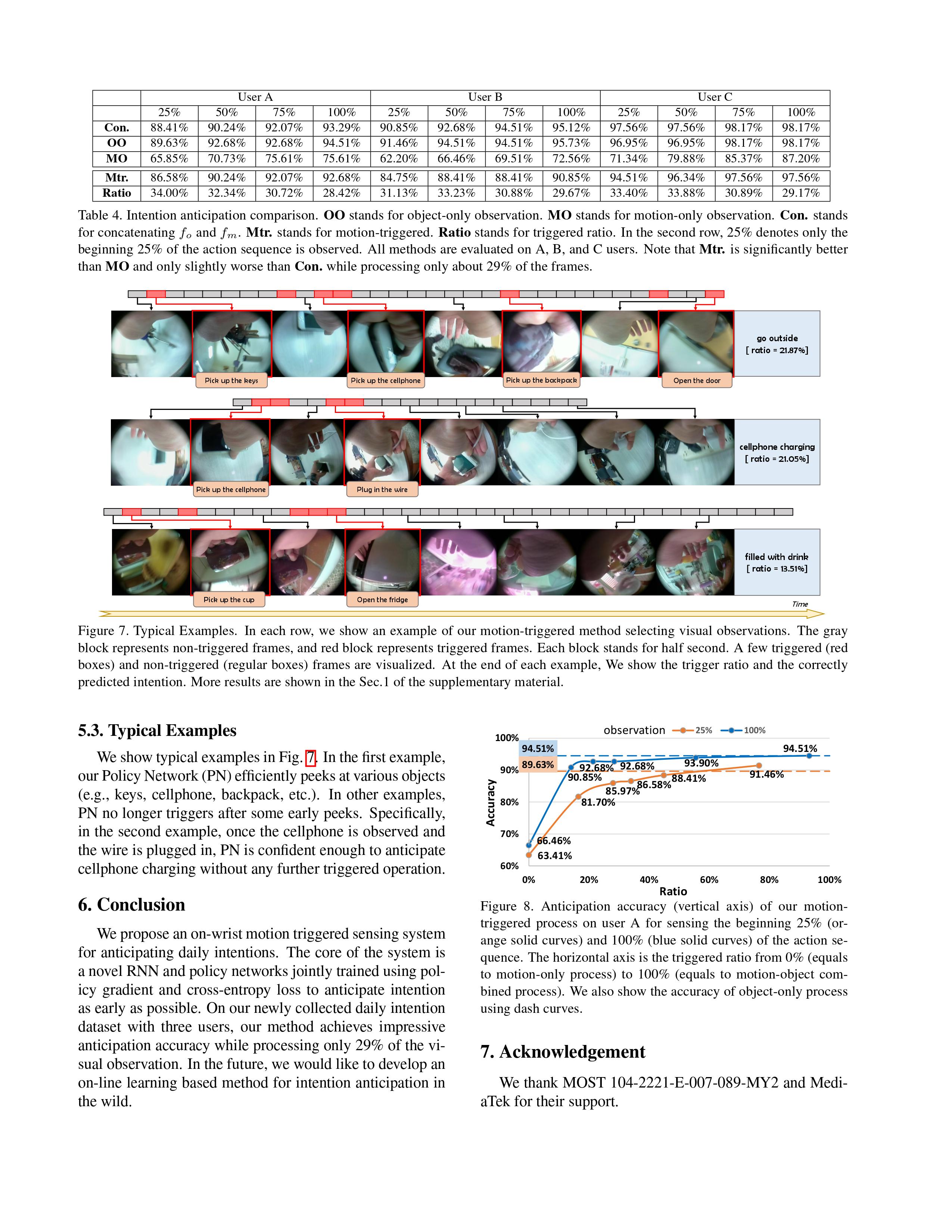

Anticipating human intention by observing a person’s actions has many applications. For instance, picking up a cellphone and then a charger (actions) implies that the person wants to charge the cellphone (intention). By anticipating this intention, an intelligent system can guide the user to the closest power outlet. We propose an on-wrist, motion-triggered sensing system for anticipating daily intentions, in which on-wrist sensors allow us to observe a person’s actions persistently. The core of the system is a novel Recurrent Neural Network (RNN) and Policy Network (PN): the RNN encodes visual and motion observations to anticipate intention, and the PN parsimoniously triggers visual observation to reduce the computational requirement. We jointly train the whole network using policy gradient and cross-entropy loss. For evaluation, we collect the first daily “intention” dataset, consisting of 2379 videos with 34 intentions and 164 unique action sequences. Our method achieves 92.68%, 90.85%, and 97.56% accuracy on three users while processing only 29% of the visual observations on average.
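The abstract describes the training objective only at a high level. As a rough illustration, and not the authors' implementation, the sketch below shows one common way to combine a cross-entropy term with a REINFORCE-style policy-gradient term for a binary "trigger the camera or not" policy. The function names, the reward (correct prediction minus a per-trigger cost), and the `cost_per_trigger` value are all assumptions made for this example; the paper defines the actual architecture and reward.

```python
import math
import random


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def sample_triggers(trigger_probs, rng):
    """Sample one binary decision per time step (1 = process the visual frame).

    trigger_probs stands in for the output of a policy network (hypothetical here).
    Returns the sampled actions and the log-probability of the whole sequence.
    """
    actions = [1 if rng.random() < p else 0 for p in trigger_probs]
    log_prob = sum(
        math.log(p if a == 1 else 1.0 - p)
        for p, a in zip(trigger_probs, actions)
    )
    return actions, log_prob


def joint_loss(intention_logits, label, actions, log_prob, cost_per_trigger=0.1):
    """Cross-entropy on the intention plus a REINFORCE surrogate for the triggers.

    Assumed reward: 1 for a correct prediction, minus a cost for each
    triggered visual observation. This reward is illustrative only.
    """
    probs = softmax(intention_logits)
    ce = -math.log(probs[label])
    predicted = max(range(len(probs)), key=probs.__getitem__)
    reward = (1.0 if predicted == label else 0.0) - cost_per_trigger * sum(actions)
    pg = -reward * log_prob  # minimizing this raises the likelihood of rewarded decisions
    return ce + pg, reward


def trigger_ratio(actions):
    """Fraction of time steps at which the visual stream was processed."""
    return sum(actions) / len(actions)
```

A usage sketch: sample `actions, log_prob = sample_triggers(probs, random.Random(0))`, run the recurrent model only on the triggered frames to obtain `intention_logits`, then minimize the value returned by `joint_loss`. In this toy setup, `trigger_ratio(actions)` plays the role of the "fraction of visual observation processed" figure quoted in the abstract.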

Daily intention dataset

Daily object dataset

ICCV 2017

Anticipating Daily Intention using On-Wrist Motion Triggered Sensing

Tz-Ying Wu*,

Ting-An Chien*,

Cheng-Sheng Chan,

Chan-Wei Hu,

Min Sun

(* indicates equal contribution)

Spotlight Presentation

Paper (arXiv)

@inproceedings{WuChienICCV17,
  title = {Anticipating Daily Intention using On-Wrist Motion Triggered Sensing},
  author = {Tz-Ying Wu and Ting-An Chien and Cheng-Sheng Chan and Chan-Wei Hu and Min Sun},
  year = {2017},
  booktitle = {International Conference on Computer Vision (ICCV)}
}

Acknowledgement
